Until now, we have had to test all the ATbar plugins manually in both languages. This takes time, and the only way to record and share the results has been in Excel spreadsheets or Word documents.


Online Testing

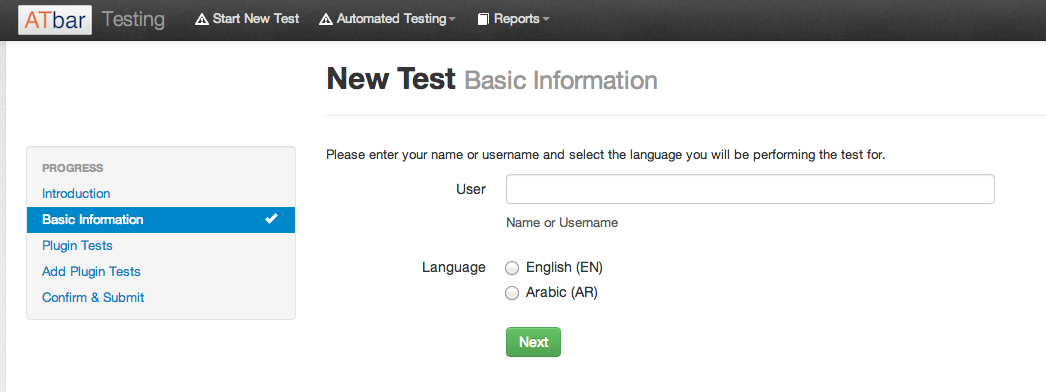

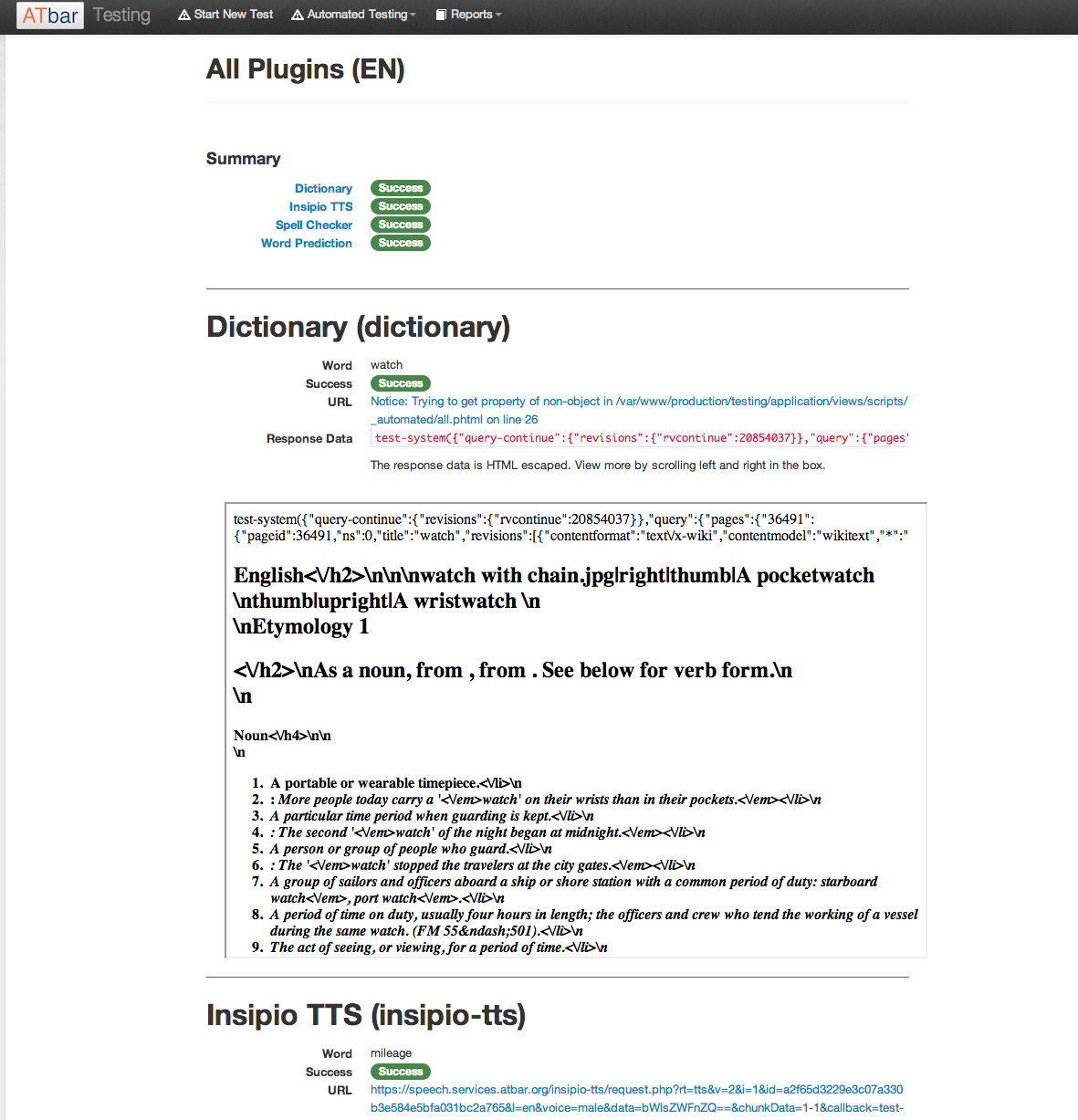

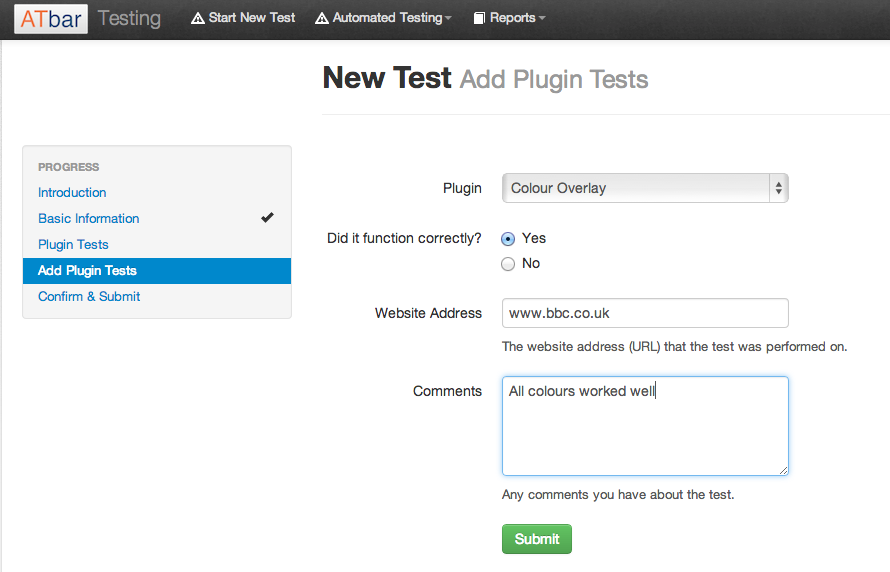

Magnus has developed a testing system that not only allows us to record manual checks but also makes automated checks possible for certain plugins. So far, these cover the following plugins in both languages:


- Dictionary

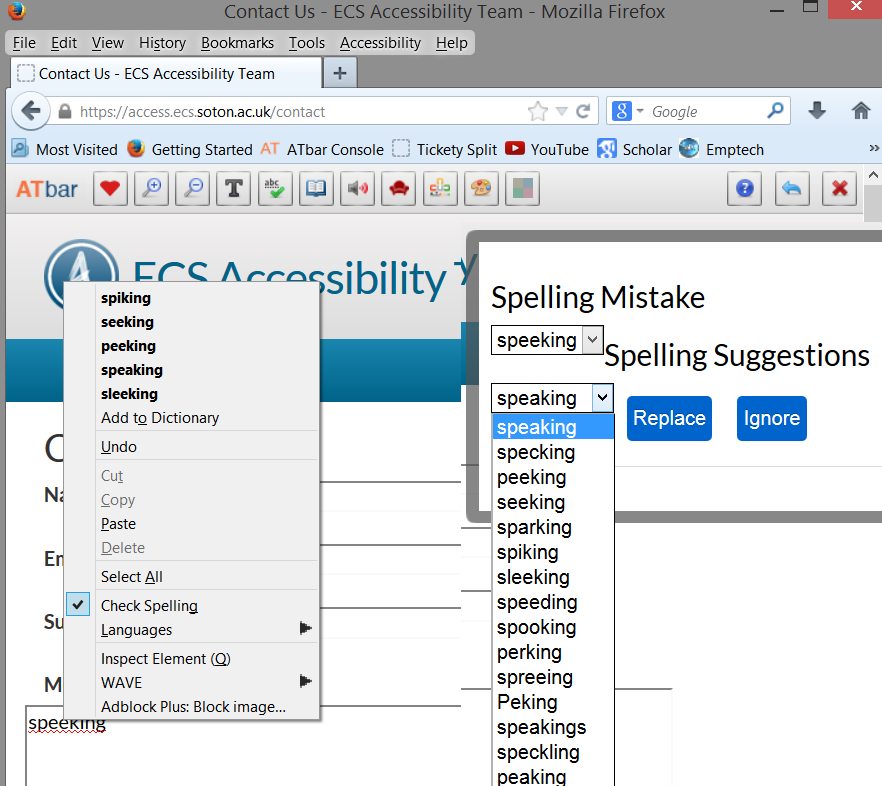

- Spell checker

- Text to Speech

- Word Prediction

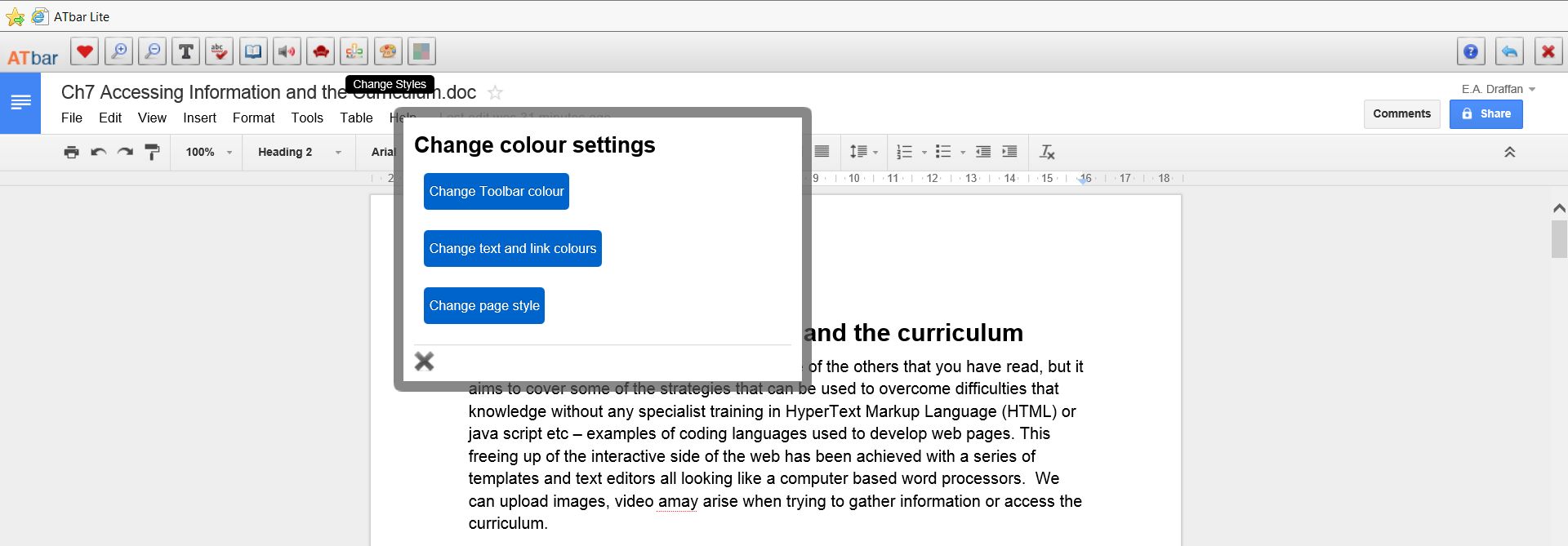

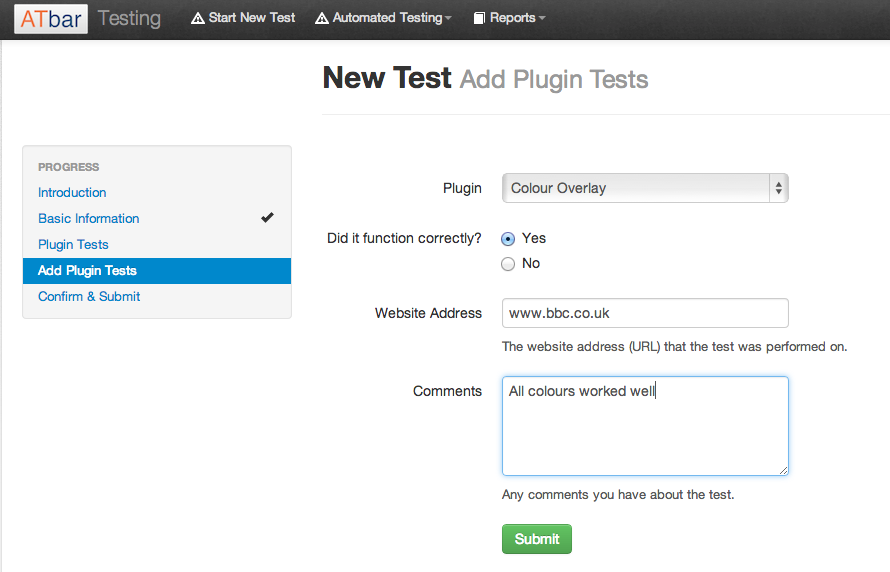

Manual tests are carried out on all plugins that change the look and feel of a web page when ATbar is used. These include:

- Text resizing

- Font changes

- Readability

- Style changes

- Colour overlays.

A series of well-known websites in both languages is chosen for each test, and the results are noted, with comments, in a form that is submitted to a database. The automated test results are recorded in a similar way.

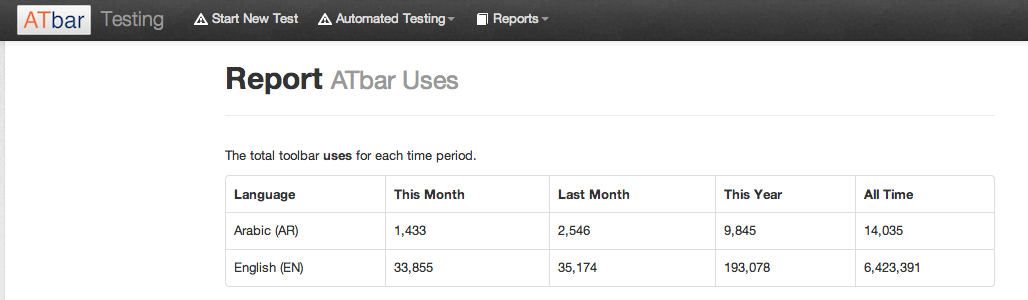

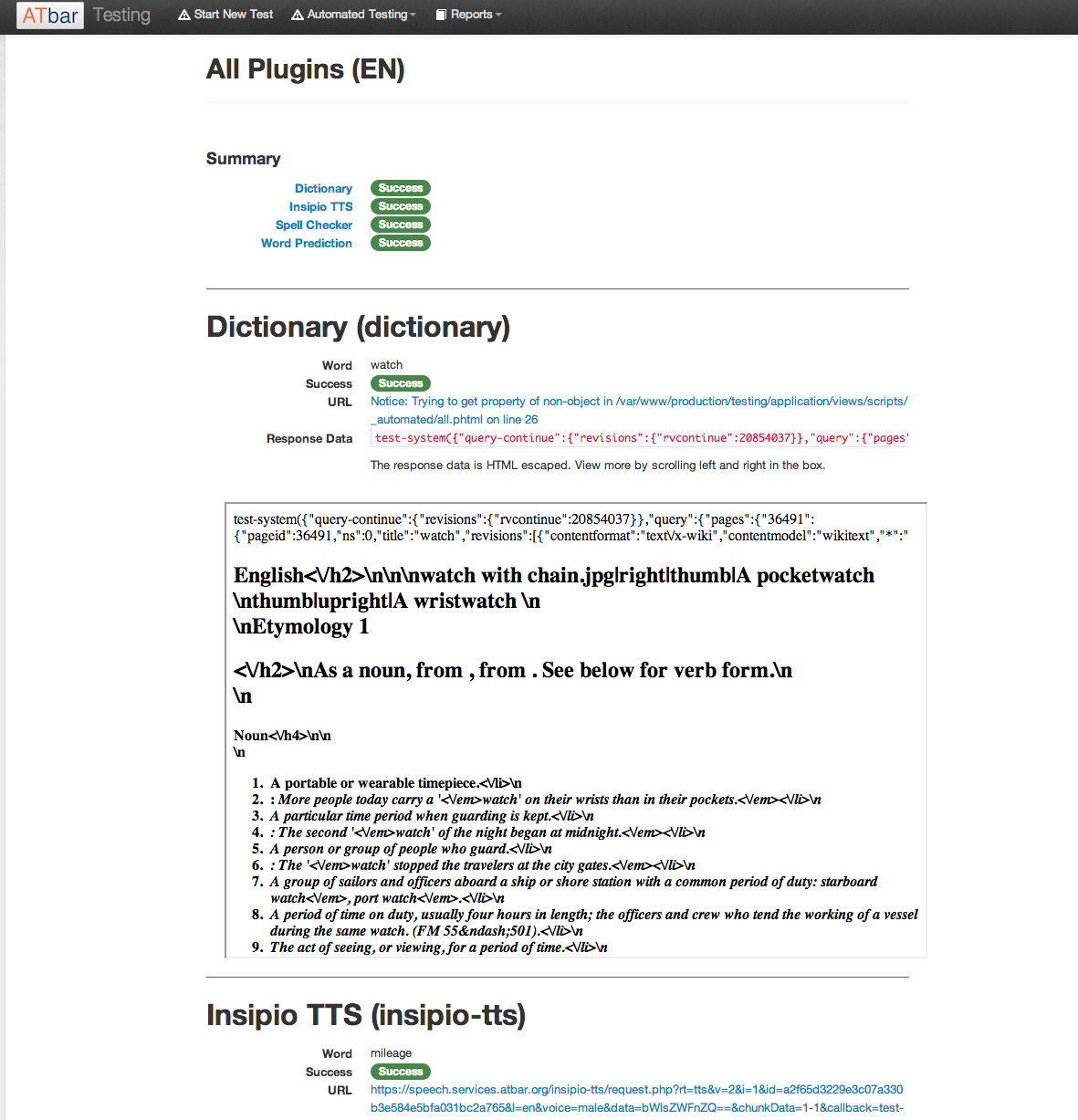

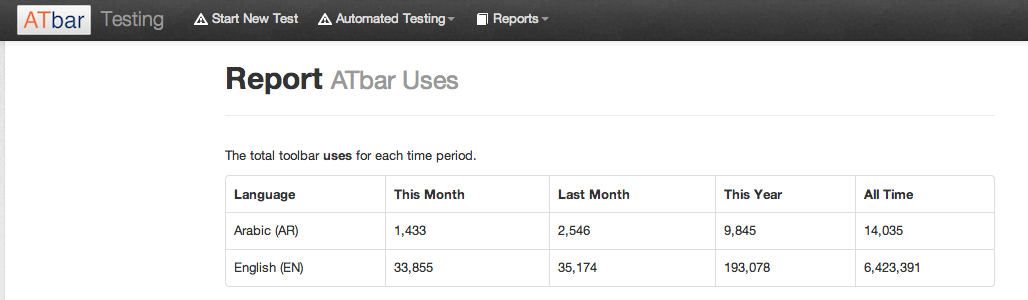

Both manual and automated results can now be viewed as reports alongside the toolbar usage statistics. The reports are live and can be viewed at any time. The ATbar testing page, with a link to the reports, is available on the services page.

The toolbar usage report currently shows monthly uses, last month's uses, this year's uses and total uses in both languages. These appear as a table that can be printed.
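How the report computes these figures is not described here. As a minimal Python sketch, assuming each toolbar launch is logged with its date (the input format and the name `usage_summary` are hypothetical), the figures for one language could be derived like this:

```python
from collections import Counter
from datetime import date


def usage_summary(launches, today):
    """Summarise toolbar launches for one language.

    `launches` is a list of dates on which the toolbar was launched.
    Hypothetical input: the real report reads its data from the database.
    """
    # Step back one month, wrapping from January to December of the previous year.
    if today.month == 1:
        last_month = (today.year - 1, 12)
    else:
        last_month = (today.year, today.month - 1)

    # Count launches per (year, month); missing months count as 0.
    per_month = Counter((d.year, d.month) for d in launches)
    return {
        "monthly": dict(sorted(per_month.items())),
        "last_month": per_month[last_month],
        "this_year": sum(n for (year, _), n in per_month.items() if year == today.year),
        "total": len(launches),
    }


summary = usage_summary(
    [date(2023, 12, 5), date(2024, 1, 3), date(2024, 1, 20), date(2024, 2, 1)],
    today=date(2024, 2, 15),
)
print(summary)
```

Calling the function once per language would give the two columns of the printed table.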


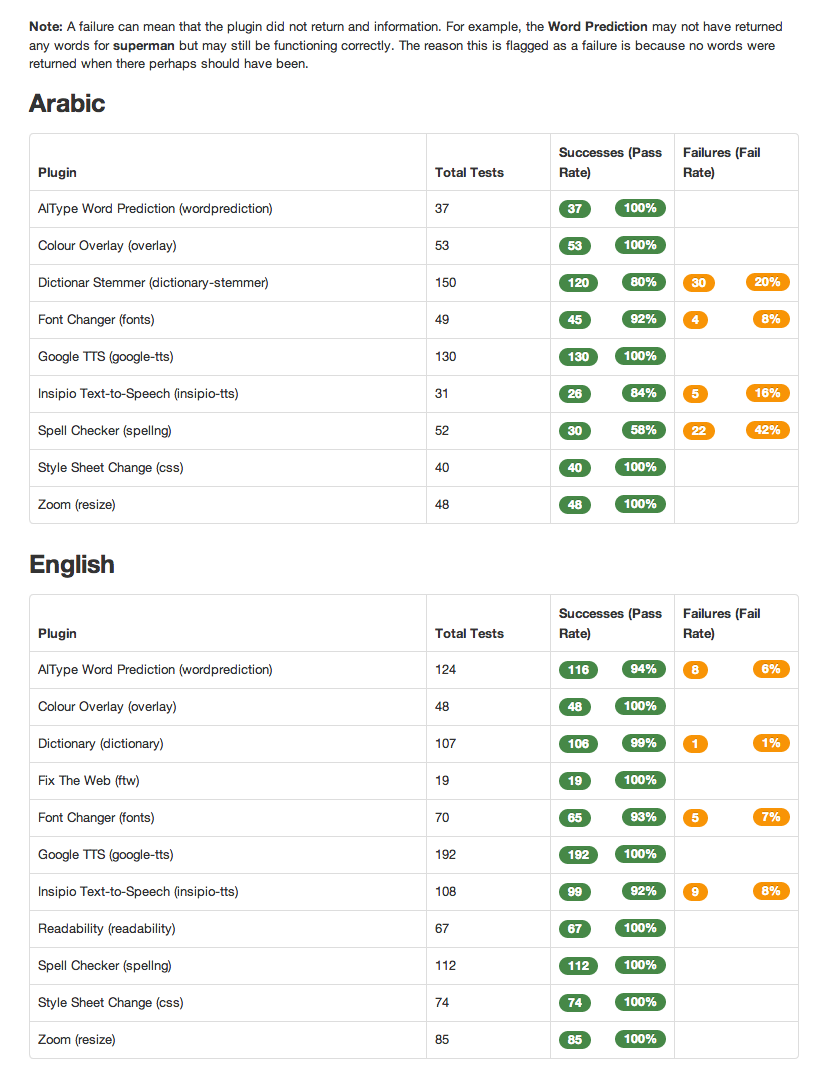

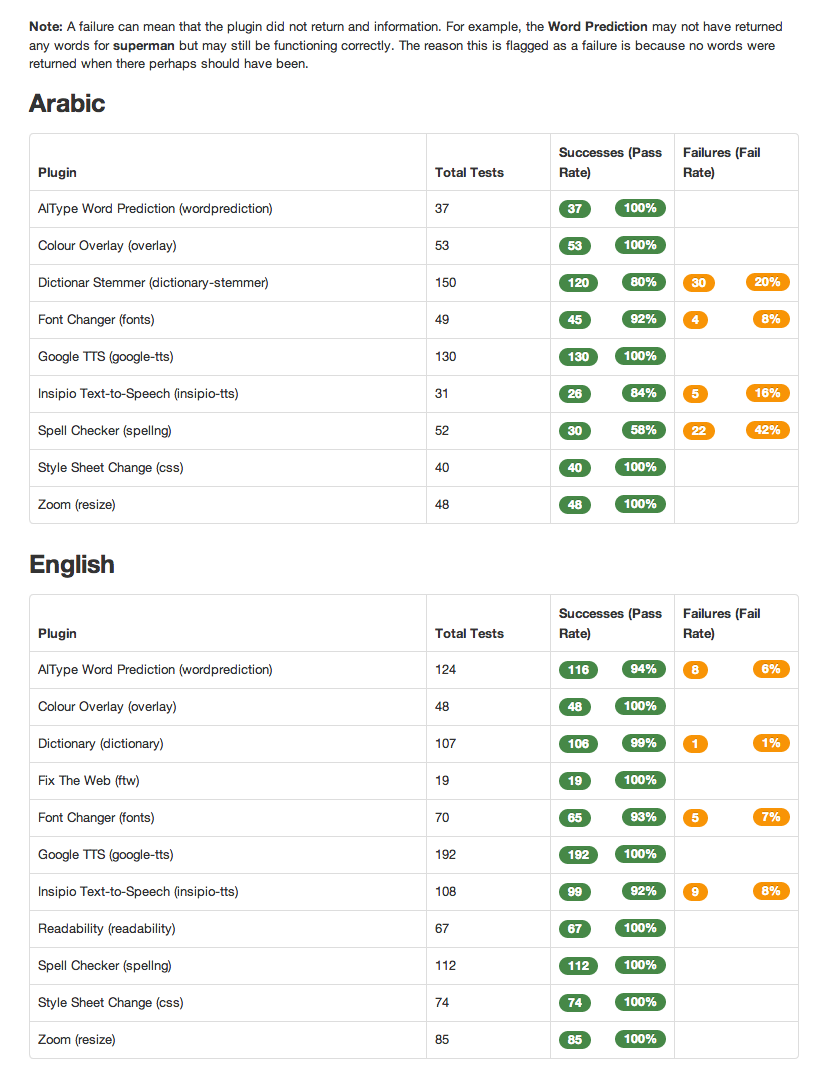

The plugin tests can be viewed as a report, with each plugin listed on the left of the table alongside the total number of tests undertaken, the number of successes and failures, and the percentage success and failure rates.
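The report's internals are not documented here. As a minimal Python sketch, assuming each stored test result can be reduced to a (plugin, passed) pair, the figures in each row could be derived as follows; `plugin_report` is a hypothetical name:

```python
from collections import defaultdict


def plugin_report(results):
    """Build one report row per plugin from (plugin, passed) pairs.

    Hypothetical input format: the real system stores richer records.
    Each row holds the total number of tests, successes, failures and
    the success/failure rates as percentages rounded to one decimal place.
    """
    counts = defaultdict(lambda: [0, 0])  # plugin -> [successes, failures]
    for plugin, passed in results:
        counts[plugin][0 if passed else 1] += 1

    rows = []
    for plugin in sorted(counts):
        successes, failures = counts[plugin]
        total = successes + failures  # never 0: a plugin appears only if tested
        rows.append({
            "plugin": plugin,
            "total": total,
            "successes": successes,
            "failures": failures,
            "success_pct": round(100 * successes / total, 1),
            "fail_pct": round(100 * failures / total, 1),
        })
    return rows


rows = plugin_report([
    ("Dictionary", True),
    ("Dictionary", True),
    ("Dictionary", False),
    ("Spell checker", True),
])
for row in rows:
    print(row)
```

Keeping successes and failures as raw counts, and deriving the percentages only when the report is built, means the rates always agree with the totals shown beside them.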

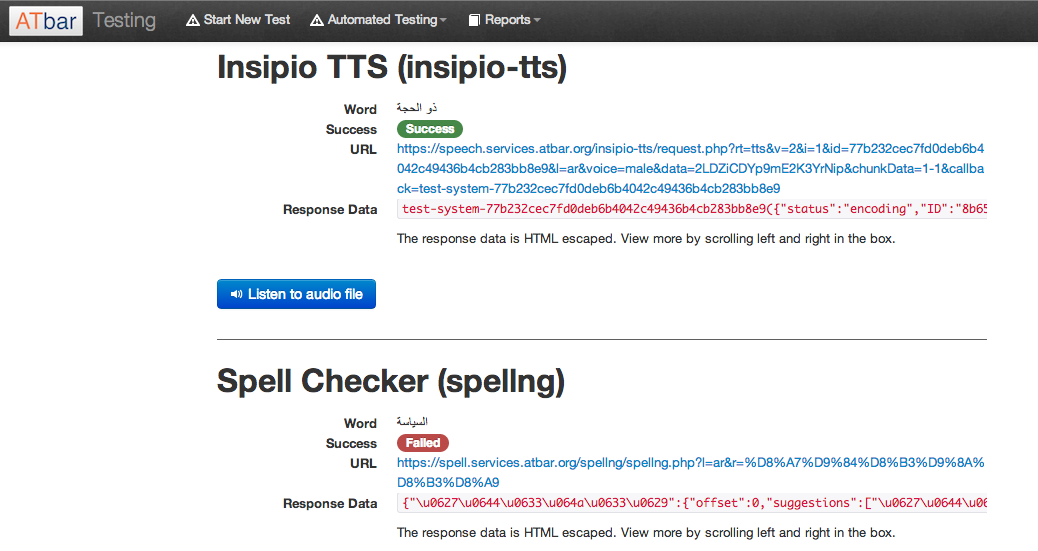

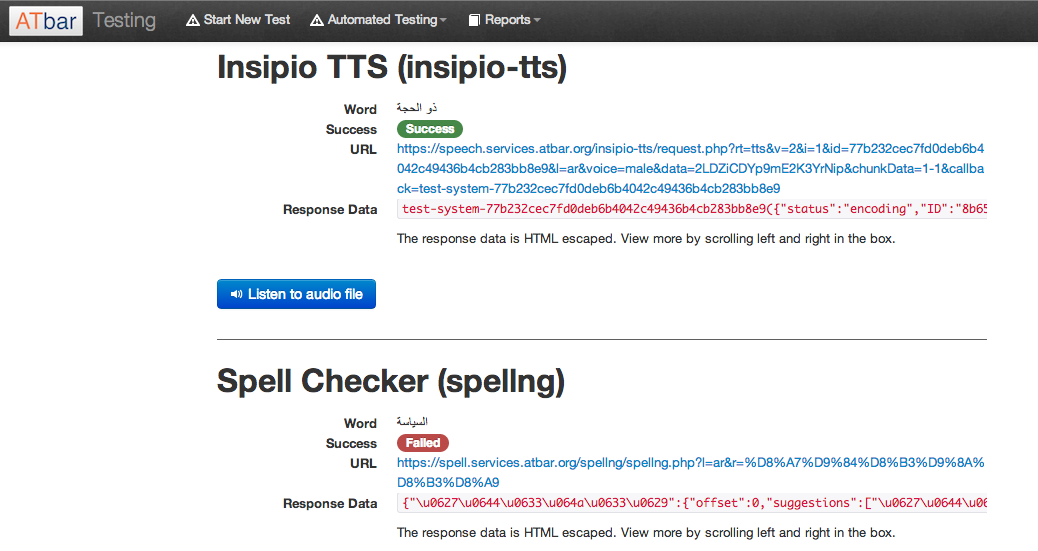

There have been some problems returning results for the Arabic language, caused by retrieving UTF-8 characters from the database and encoding them to pass to the services API so that some plugins can be tested automatically. This is clearly a technical localisation issue, and one that needs careful analysis so that it can become part of the localisation framework guidance. Magnus and Russell finally solved the problem by checking the entire stack of dependencies, such as the database, web server, runtime and framework. The solution was to change the default settings in the runtime and framework to enable UTF-8 transfers. These new settings match those already set in the database to allow Arabic text to be stored. As a result, we now support the Arabic language end-to-end, from the database through the web server to the browser.
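The stack and its exact settings are not described here, so the following is only a minimal Python sketch of why every layer has to agree on UTF-8. It assumes, hypothetically, that text reaches the services API in a URL query parameter; `encode_for_api` and `decode_from_api` are illustrative names, not part of the real system.

```python
from urllib.parse import quote, unquote


def encode_for_api(text: str) -> str:
    """Hypothetical helper: percent-encode text as UTF-8 for a URL query string."""
    return quote(text, safe="", encoding="utf-8")


def decode_from_api(param: str) -> str:
    """Hypothetical helper: reverse encode_for_api, reading the bytes as UTF-8."""
    return unquote(param, encoding="utf-8")


word = "مرحبا"  # Arabic for "hello"
stored = word.encode("utf-8")  # the bytes a UTF-8 database column would hold

# Consistent stack: each layer reads the bytes as UTF-8, so the text survives.
retrieved = stored.decode("utf-8")
round_trip = decode_from_api(encode_for_api(retrieved))

# Inconsistent stack: a layer defaulting to Latin-1 silently produces mojibake.
mismatched = stored.decode("latin-1")

print(round_trip == word)  # True
print(mismatched == word)  # False
```

The Latin-1 decode raises no error, which is why this kind of fault can be hard to trace: the text is quietly corrupted rather than rejected, and only a check of each layer's default encoding reveals it.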

One of the most vulnerable areas in testing has been how quickly the text-to-speech voices are returned. The response time has been shortened to 2 seconds in recent months, but we have noticed some lapses. These may be caused by intermittent availability of the Insipio TTS servers, as we have so far been unable to trace any other cause. Insipio have always been incredibly helpful when issues have arisen.

Finally, the testing system is still on trial, and we intend to improve it further with more automated tests that are more accurate. These will let us tell the difference between a failure and a test that returned no data. Work is ongoing, and we hope to have the new features available soon.
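The planned tests are not specified yet. As a minimal Python sketch of the idea, assuming each automated check calls the plugin service through some function and looks for an expected string in the response (both `fetch` and `run_check` are hypothetical), a three-way outcome could separate a real failure from missing data:

```python
from enum import Enum
from typing import Callable, Optional


class Outcome(Enum):
    PASS = "pass"
    FAIL = "fail"
    NO_DATA = "no data"


def run_check(fetch: Callable[[], Optional[str]], expected: str) -> Outcome:
    """Run one automated plugin check and classify the result.

    `fetch` is a hypothetical callable that queries the plugin service and
    returns its response text, or None / "" if nothing came back.
    """
    try:
        response = fetch()
    except (TimeoutError, ConnectionError):
        # The service did not answer: record that, not a plugin failure.
        return Outcome.NO_DATA
    if not response:
        return Outcome.NO_DATA
    # A response arrived, so judge it on its content.
    return Outcome.PASS if expected in response else Outcome.FAIL


print(run_check(lambda: "apple: a round fruit", "apple"))  # Outcome.PASS
print(run_check(lambda: None, "apple"))                     # Outcome.NO_DATA
```

Recording `NO_DATA` separately would keep service outages, such as intermittent TTS server availability, from lowering a plugin's failure rate.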

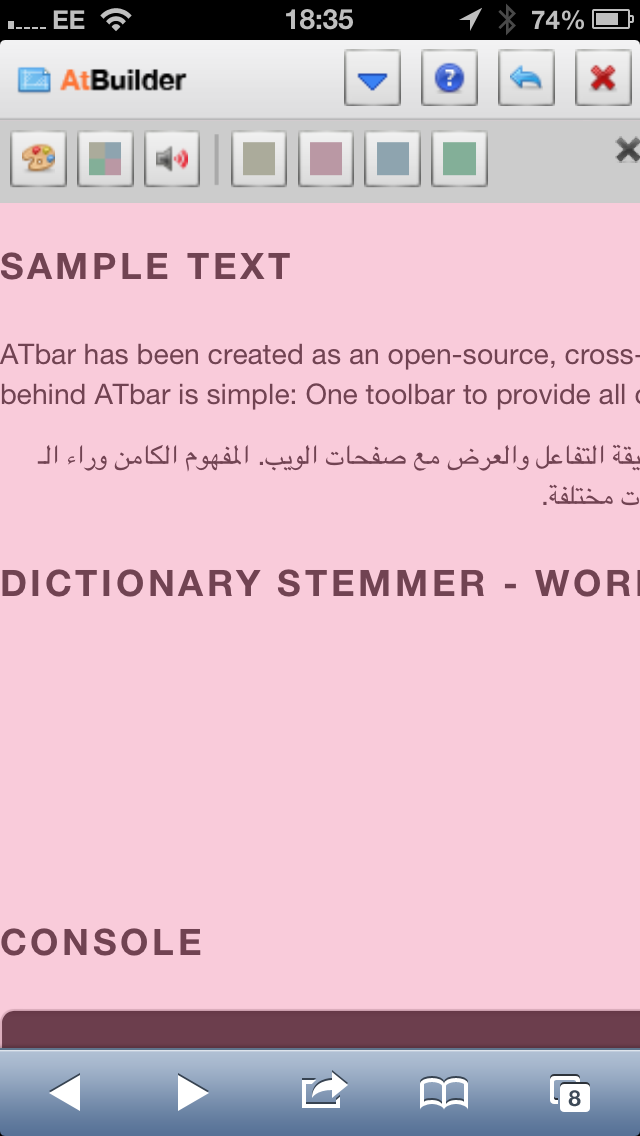

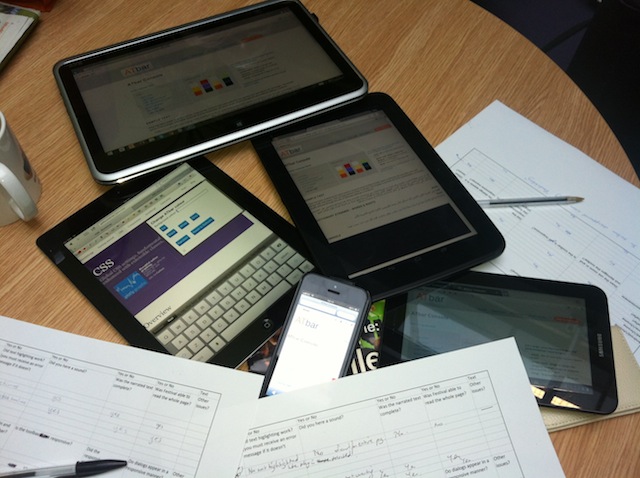

A group of us met up over lunch to test ATbar on a series of portable devices. This was very much a ‘beta’ testing session with critical friends. We filled in a test form and the results were analysed.
